What comes to mind when you hear the words "machine learning"? The go-to answers are usually self-driving cars, face recognition and serving cat videos in your feed at just the right time. But I came across a paper, "Hidden Technical Debt in Machine Learning Systems", published five years ago by a group of Google researchers and widely shared in the ML community. It suggests that something else should come to mind as well: technical debt.

In this article, I will try to summarise what the paper means by technical debt in the context of machine learning.

Complex Models Erode Boundaries

As models become more and more complex, the code around them becomes tightly coupled to them. The paper sums this up as the CACE principle: Changing Anything Changes Everything. A change upstream can end up affecting something downstream in a completely different part of the system. Also, if access controls are not properly implemented, some consumers of the ML predictions may be undeclared, silently using the model's output as input to their own systems. If something goes wrong upstream, the problem then contaminates these hidden consumers downstream, which makes debugging much more challenging.

Data Dependencies

As we all know, a machine learning model usually needs a good amount of quality data to work. This means any change to the model's data has to be handled carefully as well. Otherwise, when something like the business logic changes, it can be very difficult to adapt, because some of the features the model relies on may now be legacy features. In other cases, there are underutilised features that contribute little but quietly sit in the training process and gradually affect the model's output.
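To make this a bit more concrete, here is a minimal Python sketch of the leave-one-feature-out check the paper recommends for spotting underutilised features: retrain without each feature in turn and flag the ones whose removal barely changes the held-out score. The `train_and_score` callable, the feature names and the toy scorer are hypothetical stand-ins for a real training and evaluation run.

```python
from typing import Callable, Sequence


def leave_one_feature_out(
    features: Sequence[str],
    train_and_score: Callable[[Sequence[str]], float],
    tolerance: float = 0.001,
) -> list[str]:
    """Return features whose removal lowers the held-out score by less than `tolerance`.

    `train_and_score` is a hypothetical callable: it trains a model on the given
    feature subset and returns a held-out score where higher is better.
    """
    baseline = train_and_score(features)
    candidates = []
    for feature in features:
        reduced = [f for f in features if f != feature]
        drop = baseline - train_and_score(reduced)
        if drop < tolerance:
            candidates.append(feature)  # barely matters: candidate for removal
    return candidates


# Toy stand-in for a real training run: each feature adds a fixed amount to the score.
CONTRIBUTION = {"price": 0.10, "category": 0.05, "legacy_flag": 0.0, "epsilon_feature": 0.0002}


def toy_train_and_score(features: Sequence[str]) -> float:
    return 0.5 + sum(CONTRIBUTION[f] for f in features)


print(leave_one_feature_out(list(CONTRIBUTION), toy_train_and_score))
# ['legacy_flag', 'epsilon_feature']
```

One caveat with this design: two strongly correlated features can each look unimportant on their own, because the other one covers for it, so a flagged feature is a candidate to investigate rather than something to delete automatically.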

Feedback Loops

One way to find out whether a machine learning model works is to have a feedback loop that shows how the model performed. However, such feedback loops can be hidden. The paper gives the example of an e-commerce page with two different models, one selecting related products and one selecting related reviews. Although they are two separate models with two separate feedback loops, they can still affect each other in a hidden way, for example when users start clicking more or less on one component in reaction to changes in the other. Nobody notices unless they look into it closely.

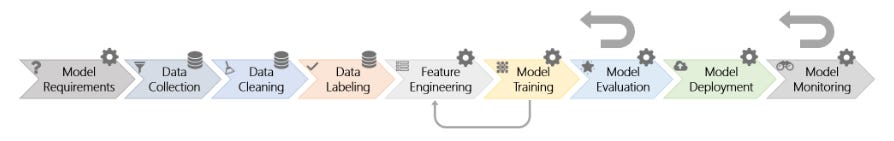

ML-System Anti-Patterns

These are the things people do that push problems off until later. Examples include glue code written to transform your data to fit a particular package; so many pipelines that they turn into a pipeline jungle; a lack of clear abstractions around models, which makes the system more and more ambiguous; and the "smells" of a poor ML system, such as mixing multiple languages or not being able to move code easily from development to production. Anti-patterns are not exclusive to traditional software engineering; machine learning systems can fall victim to them too.
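The paper's suggestion for glue code is to wrap black-box packages behind a common API, so the rest of the system does not depend on one package's quirks. Below is a minimal Python sketch of that idea; `Scorer`, `VendorModel`, `VendorAdapter` and `top_k` are all hypothetical names made up for illustration, and `VendorModel` just stands in for any third-party library with its own input format.

```python
from typing import Protocol, Sequence


class Scorer(Protocol):
    """In-house interface the rest of the system codes against."""

    def score(self, rows: Sequence[dict]) -> list[float]: ...


class VendorModel:
    """Stand-in for a hypothetical black-box package with its own input format."""

    def run(self, matrix: list[list[float]]) -> list[float]:
        return [sum(row) / len(row) for row in matrix]  # dummy "prediction"


class VendorAdapter:
    """The only place that knows about VendorModel's conventions."""

    def __init__(self, model: VendorModel, feature_order: Sequence[str]) -> None:
        self._model = model
        self._feature_order = list(feature_order)

    def score(self, rows: Sequence[dict]) -> list[float]:
        # Translate named-feature rows into the positional matrix the vendor expects.
        matrix = [[float(row[name]) for name in self._feature_order] for row in rows]
        return self._model.run(matrix)


def top_k(scorer: Scorer, rows: Sequence[dict], k: int) -> list[dict]:
    """Downstream code depends only on Scorer, never on the vendor package."""
    scores = scorer.score(rows)
    ranked = sorted(range(len(rows)), key=lambda i: scores[i], reverse=True)
    return [rows[i] for i in ranked[:k]]


rows = [{"a": 1, "b": 3}, {"a": 0, "b": 0}, {"a": 4, "b": 4}]
print(top_k(VendorAdapter(VendorModel(), ["a", "b"]), rows, k=2))
# [{'a': 4, 'b': 4}, {'a': 1, 'b': 3}]
```

If the package is ever swapped out, only a new adapter has to be written; `top_k` and anything else built on `Scorer` stays untouched.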

Configuration Debt

This one is probably more straightforward: a machine learning system tends to come with a long list of configuration settings. If that configuration is not carefully versioned, compared and checked for mistakes, tiny changes can become intertwined across different features and gradually make the model less and less capable.
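The paper lists a few properties good configuration should have, including being able to see the difference between two configurations easily and to assert basic facts about them automatically. Here is a minimal Python sketch of both, assuming configurations are plain dictionaries; the setting names, `KNOWN_KEYS` and the `max_features` limit are hypothetical examples.

```python
MISSING = "<missing>"  # marker for a key present in only one config


def diff_configs(old: dict, new: dict) -> dict[str, tuple]:
    """Return {key: (old_value, new_value)} for every setting that differs."""
    changes = {}
    for key in sorted(old.keys() | new.keys()):
        before, after = old.get(key, MISSING), new.get(key, MISSING)
        if before != after:
            changes[key] = (before, after)
    return changes


def validate_config(cfg: dict, known_keys: set[str], max_features: int) -> list[str]:
    """Assert a few basic facts; return a list of human-readable problems."""
    problems = []
    unknown = sorted(set(cfg) - known_keys)
    if unknown:
        problems.append(f"unknown settings (typos or dead options?): {unknown}")
    features = cfg.get("features", [])
    if len(features) > max_features:
        problems.append(f"too many features: {len(features)} > {max_features}")
    if len(set(features)) != len(features):
        problems.append("duplicate feature names")
    return problems


KNOWN_KEYS = {"learning_rate", "features", "threshold"}
old = {"learning_rate": 0.1, "features": ["price", "category"], "threshold": 0.5}
new = {"learning_rate": 0.05, "features": ["price", "category"], "threshold": 0.5,
       "lerning_rate": 0.1}  # typo'd key: silently ignored unless we check

print(diff_configs(old, new))
# {'learning_rate': (0.1, 0.05), 'lerning_rate': ('<missing>', 0.1)}
print(validate_config(new, KNOWN_KEYS, max_features=10))
# ["unknown settings (typos or dead options?): ['lerning_rate']"]
```

Running checks like these in code review or CI, next to the versioned config files, is one way to catch the small, easy-to-miss changes described above.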

Dealing with Changes in the External World

When a machine learning model is first built, everything works fine… until the world changes and things need to be adjusted manually. Monitoring and testing these models is as important as model accuracy. For example, the decision threshold of a classification model should be able to respond to change, instead of being set by hand and needing extra attention that we usually don’t have while rushing to deliver other new things. The paper points to learning such thresholds from held-out validation data instead.
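As a rough illustration of learning a threshold from held-out data rather than hard-coding it, here is a minimal Python sketch that picks the lowest threshold meeting a target precision. The target-precision criterion, the function names and the validation numbers are my own assumptions for the example; a real system might optimise a different metric.

```python
from typing import Optional, Sequence


def precision_at(threshold: float, scores: Sequence[float], labels: Sequence[int]) -> float:
    """Precision when everything scoring >= threshold is predicted positive."""
    predicted = [y for s, y in zip(scores, labels) if s >= threshold]
    return sum(predicted) / len(predicted) if predicted else 0.0


def pick_threshold(
    scores: Sequence[float], labels: Sequence[int], target_precision: float
) -> Optional[float]:
    """Lowest candidate threshold that meets the target precision on held-out data.

    Lower thresholds flag more items, so the lowest one that still meets the
    target keeps the most recall. Precision is not monotonic in the threshold,
    so candidates that miss the target are skipped rather than ending the search.
    """
    for t in sorted(set(scores)):
        if precision_at(t, scores, labels) >= target_precision:
            return t
    return None  # no threshold reaches the target


# Hypothetical held-out validation scores and true labels (1 = positive).
val_scores = [0.1, 0.3, 0.35, 0.6, 0.7, 0.9]
val_labels = [0, 0, 1, 0, 1, 1]
print(pick_threshold(val_scores, val_labels, target_precision=0.75))  # 0.35
```

Re-running this on a fresh validation slice, on a schedule or when monitoring shows the data drifting, lets the threshold follow the world instead of waiting for someone to notice and edit it by hand.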

Other Areas of ML-related Debt

Last but not least are the areas that didn’t make it into the list above but are still very important, such as data testing, reproducibility and process management. I am sure the list goes on, and you can imagine how many more things could be discussed.

The bottom line

This paper came out five years ago. Back then, AlphaGo had not yet beaten the best Go players in the world, and we had not yet seen the Brexit referendum and the US election, whose outcomes may have been swayed by how our news feeds work. Things have definitely changed a lot. The field of MLOps is also on the rise, with cloud giants like AWS (SageMaker), Microsoft (Azure MLOps) and GCP (Kubeflow) each offering their own solution to this problem. However, if you think a machine learning model is a magic wand that can solve mankind’s problems with a flick, that future is still yet to come. Hopefully, in another five years we will see more advancements in this area, so that data science becomes much simpler and accessible to more people. I am on the optimistic side. What about you?